Betaworks is embracing the AI trend not with yet another LLM, but with a clutch of agent-type models that automate everyday tasks that nevertheless aren’t so simple to define. The investor’s latest “Camp” incubator trained up and funded nine AI agent startups it hopes will take on today’s more tedious tasks.

The use cases for many of these companies sound promising, but AI tends to have trouble keeping its promises. Would you trust a shiny new AI to sort your email for you? What about extracting and structuring information from a webpage? Will anyone mind an AI slotting meetings in wherever works?

There’s an element of trust that has yet to be established with these services, something that occurs with most technologies that change how we act. Asking MapQuest for directions felt weird until it didn’t — and now GPS navigation is an everyday tool. But are AI agents at that stage? Betaworks CEO and founder John Borthwick thinks so. (Disclosure: Former TechCrunch editor and Disrupt host Jordan Crook left TC to work at the firm.)

“You’re keying into something that we’ve spent a lot of time thinking about,” he told TechCrunch. “While agentic AI is in its nascence — and there are issues at hand around success rates of agents, etc — we’re seeing tremendous strides even since Camp started.”

While the tech will continue improving, Borthwick explained some customers are ready to embrace it in its current state.

“Historically, we’ve seen customers take a leap of faith, even with higher-stakes tasks, if a product was ‘good enough.’ The original Bill.com, despite doing interesting things with OCR and email scraping, didn’t always get it right, and users still trusted it with thousands of dollars worth of transactions because it made a terrible task less terrible. And over time, through highly communicative interface design, the feedback loops from those customers created an even better, more reliable product,” he said.

“For now, most of the early users of the products in Camp are developers and founders and early tech adopters, and that group has always been willing to patiently test and deliver feedback on these products, which eventually leap over to the mainstream.”

Betaworks Camp is a three-month accelerator in which selected companies in the chosen theme get hands-on help with their product, strategy, and connections before getting shooed out the door with a $500K check — courtesy of Betaworks itself, Mozilla Ventures, Differential Ventures, and Stem AI. But not before the startups strut their stuff on demo day, May 7.

We got a look at the lineup beforehand, though. Here are the three that stuck out to me the most.

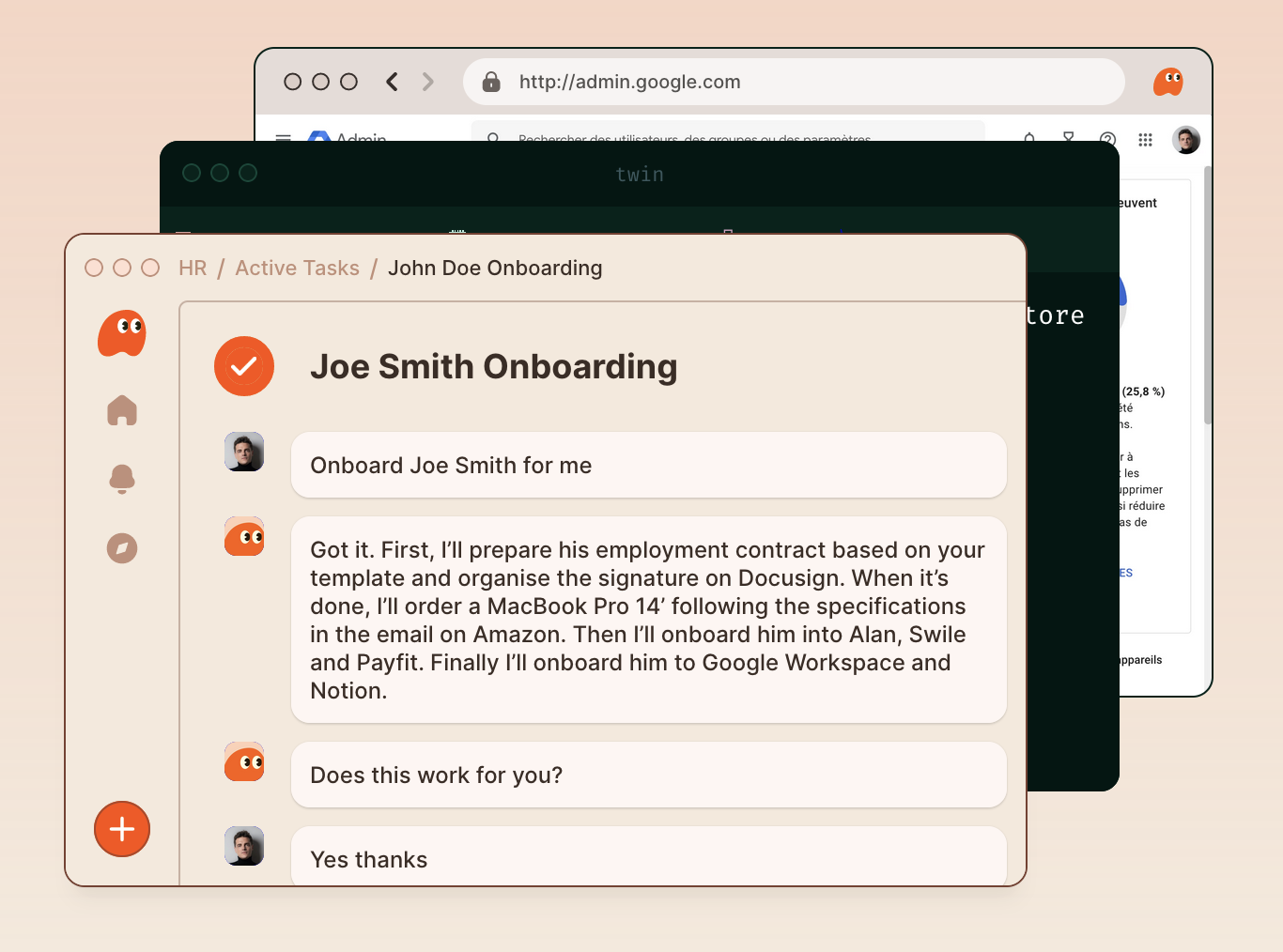

Twin automates tasks using an “action model” of the kind we’ve heard Rabbit talk about for a few months now (but which it has not yet shipped). By training a model on lots of data representing software interfaces, it can (these companies claim) learn how to complete common tasks, things that are more complex than an API can handle, yet not so complex that they can’t be delegated to a “smart intern.” We actually wrote them up back in January.

So instead of having a backend engineer build a custom script to do a certain task, you can demonstrate the task or describe it in ordinary language. Stuff like “put all the resumés we got today in a folder in Dropbox and rename them after the applicant, then DM me the share link in Slack.” And once you’ve tweaked that workflow (“Oops, this time add the application date to the file names”) it can just be the new way that process works. Automating the 20% of tasks that take up 80% of our time is the company’s goal; whether it can do so affordably is probably the real question. (Twin declined to elaborate on the nature of their model and training process.)
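For a sense of what the hand-built alternative looks like, here is a minimal sketch of just the file-naming step of that resumé workflow, including the “add the application date” tweak. It is not Twin’s approach, which the company declined to describe; the function name `resume_filename` and its parameters are hypothetical, and the Dropbox and Slack steps are left out entirely.

```python
import re
from datetime import date
from typing import Optional


def resume_filename(applicant: str,
                    applied_on: Optional[date] = None,
                    ext: str = ".pdf") -> str:
    """Build a file name from the applicant's name (hypothetical helper)."""
    # Collapse whitespace and punctuation into single underscores.
    slug = re.sub(r"\W+", "_", applicant.strip()).strip("_")
    # The "tweak": optionally append the application date in ISO format.
    if applied_on is not None:
        slug = f"{slug}_{applied_on.isoformat()}"
    return slug + ext


print(resume_filename("Ada Lovelace"))                    # Ada_Lovelace.pdf
print(resume_filename("Ada Lovelace", date(2024, 5, 7)))  # Ada_Lovelace_2024-05-07.pdf
```

Even this small piece needs an engineer to write it and to change it when the process changes, which is the gap a described-in-plain-language workflow is meant to close.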

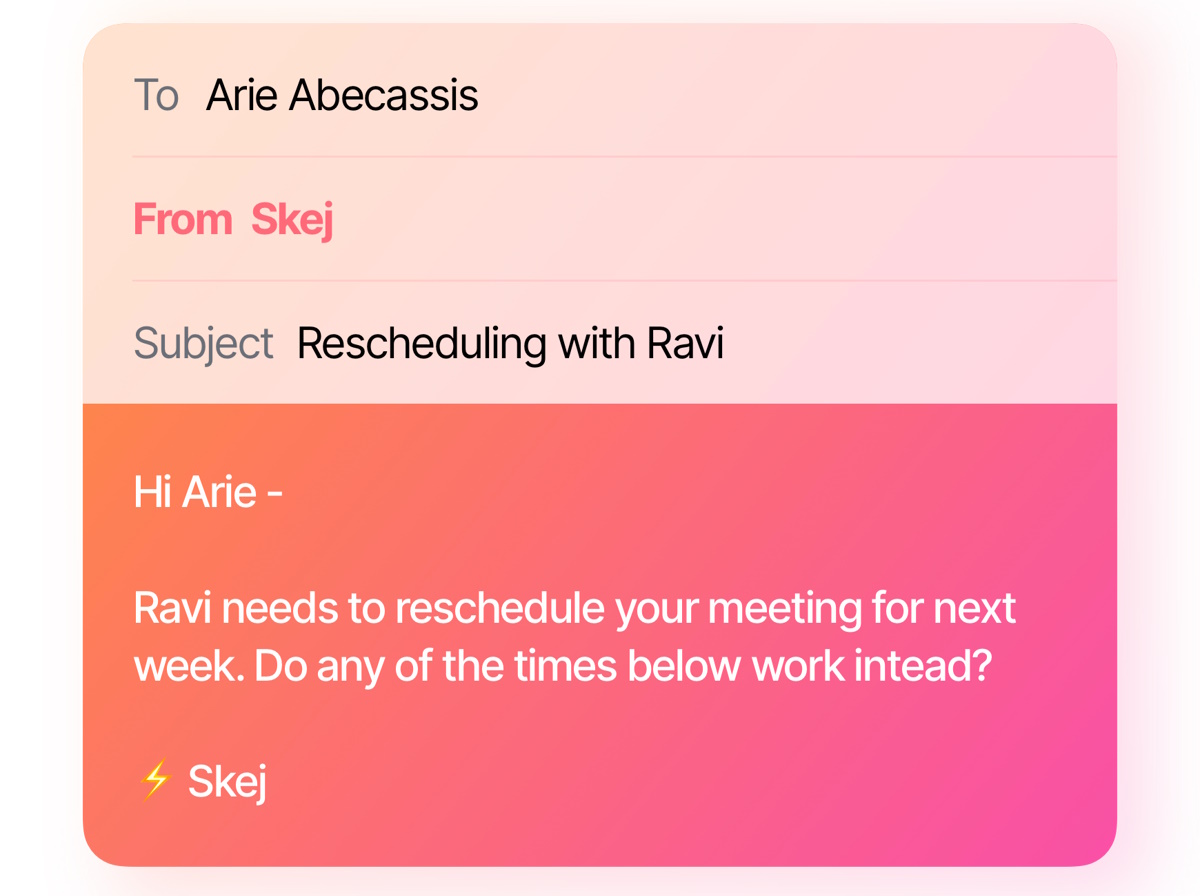

Skej aims to ameliorate the occasionally painful process of finding a meeting time that works for two (or three, or four…) people. You just cc the bot on an email or Slack thread and it’ll start the process of reconciling everyone’s availability and preferences. If it has access to schedules, it’ll check those; if someone says they’d prefer the afternoon if it’s on Thursday, it works with that; you can say some people get priority; and so on. Anyone who works with a skilled executive assistant knows they are irreplaceable, but chances are every EA out there would rather spend less time on tasks that are just a bunch of “How about this? No? How about this?”

Image Credits: Skej

As a misanthrope, I don’t have this scheduling problem, but I appreciate that others do, and that they would also prefer a “set it and forget it” type solution where they just acquiesce to the results. And it’s well within the capabilities of today’s AI agents, which would primarily be tasked with understanding natural language rather than forms.
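The “reconciling everyone’s availability” part is the easy bit once the preferences have been understood. A minimal sketch, assuming each person’s free time has already been turned into (start, end) windows; the function `common_slots` and the sample calendars are hypothetical and say nothing about how Skej actually works:

```python
from datetime import datetime, timedelta


def common_slots(calendars, duration):
    """Return the windows in which everyone is free for at least `duration`.

    `calendars` is a list with one entry per person; each entry is a list
    of (start, end) datetime pairs describing that person's free time.
    """
    common = sorted(calendars[0])
    for free in calendars[1:]:
        narrowed = []
        for a_start, a_end in common:
            for b_start, b_end in free:
                # Overlap of two windows: latest start to earliest end.
                start, end = max(a_start, b_start), min(a_end, b_end)
                if start < end:
                    narrowed.append((start, end))
        common = sorted(narrowed)
    # Drop overlaps that are too short to hold the meeting.
    return [(s, e) for s, e in common if e - s >= duration]


day = datetime(2024, 5, 2)
at = lambda hour: day + timedelta(hours=hour)

alice = [(at(9), at(12)), (at(13), at(17))]
bob = [(at(11), at(14))]

for start, end in common_slots([alice, bob], timedelta(hours=1)):
    print(start.strftime("%H:%M"), "to", end.strftime("%H:%M"))
# 11:00 to 12:00
# 13:00 to 14:00
```

Soft preferences (“afternoon if it’s Thursday”) and priority for certain attendees would sit on top of this as a ranking of the surviving windows, and extracting them from an email thread is the part that needs a language model.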

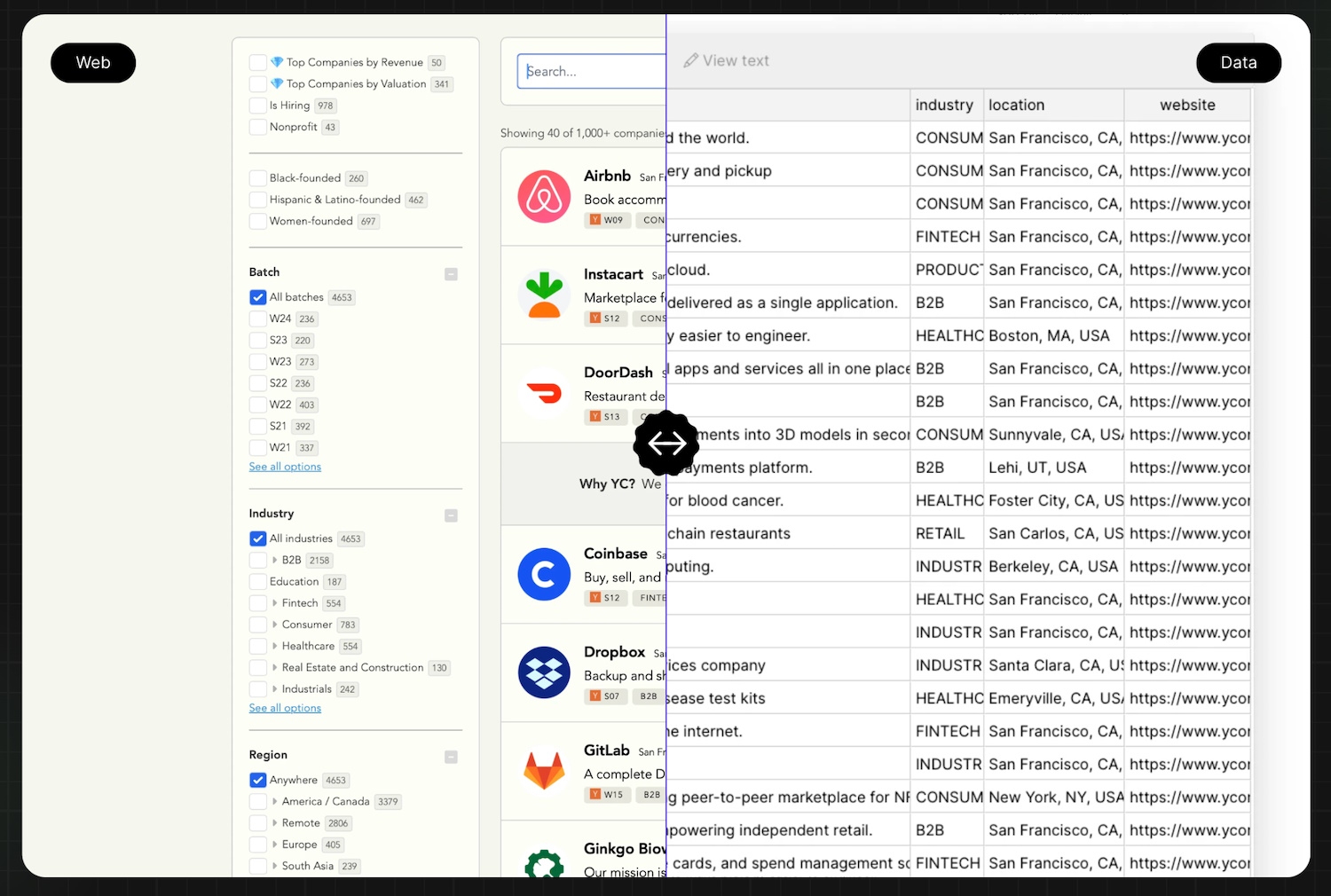

Jsonify is an evolution of website scrapers that can extract data from relatively unstructured contexts. This has been done for ages, but the engines extracting the info have never been all that smart. If the source is a big, flat document, they work fine; if the data sits in on-site tabs or some poorly coded visual list meant for humans to click around, they can fail. Jsonify uses the improved understanding of today’s visual AI models to better parse and sort data that may be inaccessible to simple crawlers.

Image Credits: Jsonify

So you could do a search for Airbnb options in a given area, then have Jsonify dump them all into a structured list with columns for price, distance from the airport, rating, hidden fees etc. Then you could go do the same thing at Vacasa and extract the same data — maybe for the same places (I did this and saved like $150 the other day, but I wish I could have automated the process). Or, you know, do professional stuff.

But doesn’t the imprecision inherent to LLMs make them a questionable tool for the job? “We’ve managed to build a pretty robust guardrail and cross-checking system,” said founder Ananth Manivannan. “We use a few different models at runtime for understanding the page, which provide some validation, and the LLMs we use are fine-tuned to our use case, so they’re usually pretty reliable even without the guardrail layer. Typically we see 95%+ extraction accuracy, depending on the use case.”
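Manivannan didn’t go into how the cross-checking works, so the following is only one plausible shape for it: a minimal sketch that assumes each model returns a flat dictionary of fields for the same listing and keeps a value only when enough models agree. The function `cross_check`, the `min_agreement` threshold, and the sample data are all hypothetical.

```python
from collections import Counter


def cross_check(extractions, min_agreement=2):
    """Merge per-model extractions field by field.

    Returns (merged, disputed): the values that at least `min_agreement`
    models agree on, and the names of fields that failed that bar.
    """
    merged, disputed = {}, []
    fields = sorted({name for result in extractions for name in result})
    for name in fields:
        # Count how many models produced each value for this field.
        votes = Counter(result[name] for result in extractions if name in result)
        value, count = votes.most_common(1)[0]
        if count >= min_agreement:
            merged[name] = value
        else:
            disputed.append(name)
    return merged, disputed


# Three hypothetical model outputs for the same rental listing.
outputs = [
    {"price": 120, "rating": 4.8},
    {"price": 120, "rating": 4.6},
    {"price": 120, "rating": 4.9, "hidden_fees": 35},
]
merged, disputed = cross_check(outputs)
print(merged)    # {'price': 120}
print(disputed)  # ['hidden_fees', 'rating']
```

Disputed fields are the ones a guardrail layer would re-extract or flag for review rather than pass through silently.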

I could see any of these being useful in probably any tech-forward business. The others in the cohort are a bit more technical or situational. Here are the remaining six:

- Resolvd AI – agentic automation of cloud workflows. Feels useful until bespoke integrations catch up to it.

- Floode – an AI inbox wrangler that reads your email and finds the important stuff while preparing appropriate responses and actions.

- Extensible AI – is your AI regressing? Ask your doctor if Extensible is the right testing and logging infra for your deployment.

- Opponent – a virtual character meant for kids to have extensive interactions and play with. Feels like a minefield ethically and legally but someone’s got to walk through it.

- High Dimensional Research – the infra play. A framework for web-based AI agents with a pay-as-you-go model so if your company’s experiment craters, you only owe a few bucks.

- Mbodi – generative AI for robotics, a field where training data is comparatively scarce. I thought it was an African word but it’s just “embody.”

There’s little doubt AI agents will play some role in the increasingly automated software workflows of the near future, but the nature and extent of that role are as yet unwritten. Clearly Betaworks aims to get its foot in the door early, even if some of the products aren’t quite ready for their mass market debut just yet.

You’ll be able to see the companies show off their agentic wares on May 7.